Scaling Data Center Fabrics for Trillion-Parameter AI Models

From legacy 10/100GbE to 800G — the hardware, software, and topology decisions that define the AI Factory of the next decade.

1. Executive Context: The Inflection Point in Data Center Architecture

The industry is navigating a fundamental shift in infrastructure design, moving beyond the limits of general-purpose networking. The transition from legacy 10/100GbE systems to 400G and 800G fabrics is a mandatory evolution, driven by the sheer volume of data that generative AI training moves between accelerators.

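Standard networking paradigms fail at the trillion-parameter scale for a specific reason. Distributed training depends on tightly synchronized collective operations (such as all-reduce) across thousands of GPUs. Best-effort networks cannot guarantee the bandwidth, lossless delivery, and predictable latency those operations need. When training models of this magnitude, the network is no longer a peripheral component; it is the "backplane" of the AI Factory.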

Modern AI workloads demand a radical rethink of latency, bandwidth density, and thermal constraints. High-performance fabrics must now resolve the "tail latency" issues that can stall thousands of GPUs, while also managing the extreme thermal loads generated by 800G port densities. This blueprint synthesizes the hardware and software shifts required for this transition, drawing on next-generation platforms such as NVIDIA Quantum and Dell PowerSwitch to ensure long-term infrastructure viability.
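A simple probability model shows why tail latency matters. The sketch rests on two hypothetical assumptions:

- Each GPU independently suffers a slow network event with probability `p_slow` on any given step.
- A synchronous training step finishes only when its slowest participant does.

Under those assumptions, the chance that a step is stalled climbs quickly with job size.

```python
# Minimal sketch, assuming independent per-GPU slow events. This is a
# simplification: real stragglers are often correlated through shared links.

def prob_step_stalled(num_gpus: int, p_slow: float) -> float:
    """Probability that at least one of num_gpus workers is slow on a step.

    A synchronous step waits for every participant, so a single slow
    worker stalls the whole step: P(stall) = 1 - (1 - p_slow) ** num_gpus.
    """
    if not 0.0 <= p_slow <= 1.0:
        raise ValueError("p_slow must be a probability in [0, 1]")
    return 1.0 - (1.0 - p_slow) ** num_gpus


if __name__ == "__main__":
    # Hypothetical per-GPU tail probability of 0.1% per step.
    for n in (8, 100, 1_000, 10_000):
        print(f"{n:>6} GPUs -> P(step stalled) = {prob_step_stalled(n, 0.001):.3f}")
```

With `p_slow = 0.001`, a 1,000-GPU job is stalled on roughly 63% of steps. This is why fabric design focuses on the tail of the latency distribution rather than its average.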

2. From Legacy to 800G: Evaluating the High-Density Hardware Foundation

For the C-suite, choosing network hardware means choosing the ultimate limit on computational scale. Strategic architects prioritize "high-radix" switches (devices with high port counts) to collapse the network hierarchy, reduce "network hops," and improve job locality. Minimizing these hops is vital for maintaining the sub-microsecond, predictable per-hop latency that synchronous AI training requires.
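The standard folded-Clos (fat tree) capacity formula makes the link between switch radix and hop count concrete:

- A non-blocking fabric of radix-`k` switches with `t` tiers connects at most 2·(k/2)^t endpoints.
- Its worst-case path crosses 2t - 1 switches.

The sketch below assumes one endpoint port per GPU and a non-blocking design. The 64- and 144-port radices mirror the switches compared in this section; the 8,192-GPU target is a hypothetical example.

```python
def fat_tree_capacity(radix: int, tiers: int) -> int:
    """Max endpoints of a non-blocking fat tree built from radix-port switches.

    Lower tiers split ports half down / half up; the top tier points all
    ports down, giving 2 * (radix / 2) ** tiers endpoints.
    """
    return 2 * (radix // 2) ** tiers


def tiers_needed(radix: int, endpoints: int) -> int:
    """Smallest number of switch tiers that can host the given endpoints."""
    if radix < 4:
        raise ValueError("radix must be at least 4 for the fabric to grow")
    tiers = 1
    while fat_tree_capacity(radix, tiers) < endpoints:
        tiers += 1
    return tiers


def worst_case_switch_hops(tiers: int) -> int:
    """Switches crossed on the longest path: up to the top tier and back down."""
    return 2 * tiers - 1


if __name__ == "__main__":
    gpus = 8_192  # hypothetical cluster size
    for radix in (64, 144):
        t = tiers_needed(radix, gpus)
        print(f"radix {radix}: {t} tiers, up to {worst_case_switch_hops(t)} switch hops")
```

The two radices handle this hypothetical 8,192-GPU cluster differently:

- 64-port switches need three tiers, with up to 5 switch hops.
- 144-port switches fit in two tiers, with up to 3 hops.

That difference is the hop reduction that high-radix switching buys.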

| Specification | Dell Z9864F-ON | NVIDIA Quantum-2 (QM9700) | NVIDIA Quantum-X800 (Q3400-RA) |
|---|---|---|---|
| Port Density | 64 x 800GbE (OSFP112) | 64 x 400Gb/s (NDR) | 144 x 800Gb/s |
| Switching Capacity (per direction / bidirectional) | 51.2 Tb/s / 102.4 Tb/s | 25.6 Tb/s / 51.2 Tb/s | 115.2 Tb/s / 230.4 Tb/s |
| Form Factor | 2RU | 1RU | 4RU |
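Switching capacity follows directly from port count times per-port rate. Vendors quote it either per direction or summed across both directions (full duplex), so a quick check keeps comparisons consistent. The sketch below uses the port counts and speeds from the table.

```python
from typing import Tuple


def switching_capacity_tbps(ports: int, gbps_per_port: int) -> Tuple[float, float]:
    """Return (per-direction, bidirectional) switching capacity in Tb/s."""
    per_direction = ports * gbps_per_port / 1000  # Gb/s -> Tb/s
    return per_direction, 2 * per_direction


PLATFORMS = {
    "Dell Z9864F-ON": (64, 800),
    "NVIDIA QM9700": (64, 400),
    "NVIDIA Q3400-RA": (144, 800),
}

if __name__ == "__main__":
    for name, (ports, speed) in PLATFORMS.items():
        one_way, both_ways = switching_capacity_tbps(ports, speed)
        print(f"{name}: {one_way:.1f} Tb/s per direction, {both_ways:.1f} Tb/s bidirectional")
```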

The Strategic Value of 800GbE in the Agentic AI Era

As we enter the era of Agentic AI, where autonomous systems require continuous, high-bandwidth interaction with massive datasets, moving to 800G is a competitive necessity. Platforms like the NVIDIA Quantum-X800 and Dell Z9864F-ON double the per-port bandwidth of their 400G predecessors (800 Gb/s versus 400 Gb/s). This density allows for superior performance isolation in multi-tenant environments, so diverse AI agents can operate concurrently with less resource contention.

3. The Open Networking Mandate: Disaggregation and Enterprise SONiC

To minimize Total Cost of Ownership (TCO) and prevent vendor lock-in, the modern AI fabric must be built on disaggregated principles. Separating the networking hardware from the operating system allows enterprises to optimize the fabric specifically for AI/ML workloads rather than general-purpose traffic.

Enterprise SONiC and ONIE

The strategic shift centers on Enterprise SONiC and the Open Network Install Environment (ONIE). ONIE facilitates "Zero Touch Installation," automating deployment of a network operating system (NOS) at scale. Enterprise SONiC provides the specialized functionality AI traffic requires: support for RDMA (Remote Direct Memory Access) over RoCEv2, and for the lossless-Ethernet mechanisms RoCEv2 relies on, such as Priority Flow Control (PFC) and Explicit Congestion Notification (ECN). These are essential for the lossless, high-speed data transfers of GPU-to-GPU communication.
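RoCEv2 fabrics commonly pair PFC with ECN-based congestion control such as DCQCN. In that scheme, the switch marks packets with a probability that ramps up as its egress queue grows.

The sketch below models that RED-style marking curve. The thresholds `k_min_kb`, `k_max_kb`, and `p_max` are hypothetical tuning values. The function is a conceptual model, not Enterprise SONiC's configuration interface.

```python
def ecn_mark_probability(queue_kb: float, k_min_kb: float,
                         k_max_kb: float, p_max: float) -> float:
    """RED-style ECN marking probability for a given egress queue depth.

    At or below k_min nothing is marked; between k_min and k_max the
    probability rises linearly to p_max; beyond k_max every packet is marked.
    """
    if k_max_kb <= k_min_kb:
        raise ValueError("k_max_kb must exceed k_min_kb")
    if queue_kb <= k_min_kb:
        return 0.0
    if queue_kb > k_max_kb:
        return 1.0
    return p_max * (queue_kb - k_min_kb) / (k_max_kb - k_min_kb)


if __name__ == "__main__":
    # Hypothetical thresholds: start marking above 100 KB, mark everything past 400 KB.
    for depth in (50, 150, 250, 400, 450):
        print(depth, round(ecn_mark_probability(depth, 100, 400, 0.2), 3))
```

Early, probabilistic marking lets senders slow down before queues fill. That in turn keeps PFC pauses, which can spread congestion upstream, as a last resort.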

Observability and the Lifecycle Strategy

Disaggregation enhances "Network Observability," allowing architects to treat the network as a programmable entity. Platforms like the Dell Z9864F-ON integrate with Dell SmartFabric Manager, providing deep telemetry and automated lifecycle management. For strategic planning, however, note that full support for "adaptive routing" and "cognitive routing" on the Z9864F-ON is a roadmap enhancement scheduled for future software releases.

4. Topology Engineering: Optimizing for Extreme Scalability

In the AI Factory, traditional hierarchical designs are being replaced by high-radix topologies engineered for massive parallelization.

Fat Tree Topology

The gold standard for extreme-scale deployments (10,000+ nodes), thanks to its redundancy and non-blocking design. Every GPU can communicate with any other GPU at full line rate with predictable performance. With 144-port switches, a two-tier design reaches 10,368 endpoints (144²/2).
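Sizing a two-tier fat tree is mostly arithmetic. The sketch below makes these assumptions:

- Each leaf switch dedicates half its ports to GPUs and half to uplinks.
- Each leaf sends one uplink to every spine.

So a radix-`k` design uses `k/2` spines and can grow to `k` leaves before a third tier is needed. The helper name and example cluster sizes are hypothetical. Real designs may also reserve ports for storage, management, or rail-optimized layouts.

```python
import math
from typing import Dict


def two_tier_fat_tree(endpoints: int, radix: int) -> Dict[str, int]:
    """Switch and cable counts for a non-blocking two-tier (leaf/spine) fat tree.

    Assumes every leaf uses radix/2 ports down (to GPUs) and radix/2 up,
    with exactly one uplink to each of the radix/2 spines.
    """
    half = radix // 2
    leaves = math.ceil(endpoints / half)
    if leaves > radix:
        # Each spine has `radix` ports, one per leaf, so this is the ceiling.
        raise ValueError(f"{endpoints} endpoints exceed a two-tier radix-{radix} fabric")
    spines = half
    return {
        "leaves": leaves,
        "spines": spines,
        "switches": leaves + spines,
        "leaf_spine_cables": leaves * spines,
    }


if __name__ == "__main__":
    print(two_tier_fat_tree(2_048, 64))   # largest two-tier build at radix 64
    print(two_tier_fat_tree(8_192, 144))  # hypothetical 8,192-GPU cluster
```

At radix 144, the hypothetical 8,192-GPU cluster needs 186 switches. At radix 64, the same request raises an error because it requires a third tier.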

SlimFly Topology

A high-radix, diameter-2 design that reduces cost and cabling complexity by minimizing the number of switches and interconnects. Offers significant TCO advantages but requires more complex routing algorithms than Fat Tree.
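SlimFly's cost advantage comes from its graph construction. The sketch below follows the MMS-graph sizing rules from the original Slim Fly paper (Besta and Hoefler, SC14), as summarized here. For a prime power `q = 4w + delta` with `delta` in {-1, 0, 1}:

- The network has `2q²` routers.
- Each router has `(3q - delta)/2` router-to-router ports.
- Each router also needs about half that many endpoint ports for full global bandwidth.

Treat this as a sizing sketch, not a routing or wiring implementation.

```python
import math
from typing import Dict


def is_prime_power(n: int) -> bool:
    """True if n = p**m for some prime p and m >= 1."""
    if n < 2:
        return False
    for p in range(2, math.isqrt(n) + 1):
        if n % p == 0:
            while n % p == 0:
                n //= p
            return n == 1
    return True  # no divisor up to sqrt(n): n itself is prime


def slim_fly_size(q: int) -> Dict[str, int]:
    """Router count, router radix, and endpoint capacity of a diameter-2 Slim Fly."""
    if not is_prime_power(q):
        raise ValueError("q must be a prime power")
    delta = {0: 0, 1: 1, 3: -1}.get(q % 4)
    if delta is None:
        raise ValueError("q must satisfy q = 4w + delta with delta in {-1, 0, 1}")
    network_ports = (3 * q - delta) // 2           # router-to-router links per router
    endpoint_ports = math.ceil(network_ports / 2)  # concentration for full bandwidth
    routers = 2 * q * q
    return {
        "routers": routers,
        "router_radix": network_ports + endpoint_ports,
        "endpoints": routers * endpoint_ports,
    }


if __name__ == "__main__":
    print(slim_fly_size(5))   # 50 routers of network degree 7 (Hoffman-Singleton graph)
    print(slim_fly_size(27))  # router radix 62, fits within a 64-port switch
```

For `q = 27`, the sketch yields 1,458 radix-62 routers serving 30,618 endpoints, with any two routers at most two hops apart. A non-blocking fat tree of that size built from 64-port switches would need three tiers.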

The Strategic Bridge: Q3200-RA

For organizations not yet ready for a full 4RU, 144-port deployment, the NVIDIA Quantum-X800 Q3200-RA (housing two independent 36-port 800G switches in a 2RU enclosure) serves as a strategic bridge. It is ideal for connecting new 800G compute clusters to existing previous-generation storage infrastructure, allowing for a phased transition.

5. In-Network Computing: Offloading the Computational Burden

Achieving trillion-parameter scale requires moving compute operations from the server to the network itself. This "In-Network Computing" strategy minimizes data traversal, reducing the congestion that typically occurs during large data aggregations.
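One way to see why in-network computing reduces traffic is to compare the data each GPU must send for an all-reduce:

- In a ring all-reduce, each of `n` GPUs sends about `2(n-1)/n` times its buffer, over `2(n-1)` sequential steps.
- With switch-based aggregation, each GPU sends its buffer once and receives the reduced result once.

The sketch below compares the two under idealized assumptions: no protocol overhead and a single aggregation tree.

```python
def ring_allreduce_bytes_sent(num_gpus: int, buffer_bytes: float) -> float:
    """Bytes each GPU transmits in a ring all-reduce (reduce-scatter + all-gather)."""
    return 2 * (num_gpus - 1) / num_gpus * buffer_bytes


def ring_allreduce_steps(num_gpus: int) -> int:
    """Sequential communication steps in a ring all-reduce."""
    return 2 * (num_gpus - 1)


def in_network_allreduce_bytes_sent(buffer_bytes: float) -> float:
    """Bytes each GPU transmits when switches perform the reduction (idealized)."""
    return buffer_bytes


if __name__ == "__main__":
    gib = 2**30
    n = 1_024  # hypothetical job size
    print(f"ring:       {ring_allreduce_bytes_sent(n, gib) / gib:.3f} GiB sent, "
          f"{ring_allreduce_steps(n)} steps")
    print(f"in-network: {in_network_allreduce_bytes_sent(gib) / gib:.3f} GiB sent")
```

At 1,024 GPUs, the ring sends nearly twice the buffer per GPU and needs 2,046 dependent steps. The aggregation tree's depth, by contrast, depends on the number of switch tiers rather than the number of GPUs.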

NVIDIA SHARP Technology

The Scalable Hierarchical Aggregation and Reduction Protocol (SHARP) is the critical technology for offloading collective operations.
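Conceptually, SHARP builds an aggregation tree out of the switches:

1. Each switch reduces the data arriving from its children.
2. It forwards a single partial result upward.
3. The root's result is sent back down to every GPU.

The toy model below simulates that flow for a two-tier leaf/spine tree in plain Python. It illustrates hierarchical reduction only; it is not the SHARP protocol or any NVIDIA API, and `gpus_per_leaf` is a hypothetical parameter.

```python
from typing import List

Vector = List[int]


def elementwise_sum(vectors: List[Vector]) -> Vector:
    """Reduce equal-length vectors by element-wise addition."""
    return [sum(values) for values in zip(*vectors)]


def hierarchical_allreduce(gpu_buffers: List[Vector], gpus_per_leaf: int) -> List[Vector]:
    """Toy two-tier in-network all-reduce (sum) over GPU buffers."""
    # Tier 1: each leaf switch reduces the buffers of the GPUs attached to it.
    leaf_partials = [
        elementwise_sum(gpu_buffers[i:i + gpus_per_leaf])
        for i in range(0, len(gpu_buffers), gpus_per_leaf)
    ]
    # Tier 2: the spine (root of the tree) reduces one partial per leaf.
    total = elementwise_sum(leaf_partials)
    # Down the tree: every GPU receives its own copy of the reduced result.
    return [list(total) for _ in gpu_buffers]


if __name__ == "__main__":
    buffers = [[1, 2], [3, 4], [5, 6], [7, 8]]  # four GPUs, two per leaf
    print(hierarchical_allreduce(buffers, gpus_per_leaf=2))
```

The spine sees one partial per leaf rather than one buffer per GPU. That is where the traffic reduction comes from.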

4th Gen SHARP (Quantum-X800)

Introduces FP8 precision and support for ReduceScatter/ScatterGather operations. Boosts application performance by up to 9X by offloading collective operations to the switch fabric.

By offloading collective operations, the Quantum-X800 allows the most expensive assets in the data center—the GPUs—to focus entirely on computation rather than managing communication overhead, significantly improving the ROI of the compute cluster.

6. Physical Layer & Thermal Management: Airflow vs. Liquid Cooling

At 800G densities, power consumption per switch can reach nearly 3000W, making thermal management a core networking strategy rather than a facility concern.
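Power figures are easier to compare as energy per bit. One watt spread over one terabit per second equals one picojoule per bit, so the conversion is a single division.

The sketch below pairs the roughly 3,000 W figure above with 51.2 Tb/s and 115.2 Tb/s switching capacities, purely as hypothetical examples. Actual power draw varies by platform, optics, and load.

```python
def picojoules_per_bit(power_w: float, capacity_tbps: float) -> float:
    """Energy per bit: 1 W / (1 Tb/s) = 1e-12 J/bit = 1 pJ/bit."""
    if capacity_tbps <= 0:
        raise ValueError("capacity must be positive")
    return power_w / capacity_tbps


def watts_per_port(power_w: float, ports: int) -> float:
    """Average power budget per front-panel port."""
    return power_w / ports


if __name__ == "__main__":
    # Hypothetical pairings of a ~3,000 W switch with two port configurations.
    for ports, tbps in ((64, 51.2), (144, 115.2)):
        print(f"{ports} ports: {picojoules_per_bit(3000, tbps):.1f} pJ/bit, "
              f"{watts_per_port(3000, ports):.1f} W/port")
```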

Air-Cooled Systems

Modern switches like the Dell Z9864F-ON and NVIDIA QM9700 utilize versatile airflow configurations—P2C (Power to Connector) or C2P (Connector to Power)—to integrate with existing hot/cold aisle designs.

Liquid-Cooled Systems

For extreme densities, liquid cooling is the only viable path. The NVIDIA Quantum-X Photonics (Q3450-LD) is 85% liquid-cooled, enabling extreme port density in environments where air cooling is thermally insufficient.

Co-Packaged Optics (CPO) and TCO

The transition to Co-Packaged Optics (CPO) represents a breakthrough in TCO. By integrating silicon photonics directly with the switch ASIC, CPO eliminates the need for pluggable transceivers, which reduces failure points and improves serviceability. Shortening the electrical path to millimeters cuts insertion loss from 22 dB to approximately 4 dB, yielding 63X better signal integrity. The result is a more resilient fabric with drastically lower power-per-bit costs.
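The standard decibel formula, ratio = 10^(dB/10), translates the insertion-loss figures into a linear ratio. Reducing loss from 22 dB to about 4 dB is an 18 dB improvement. That works out to a factor of roughly 63 in delivered signal power, consistent with the 63X figure quoted above. A minimal sketch:

```python
def db_to_power_ratio(db: float) -> float:
    """Convert a decibel value to a linear power ratio."""
    return 10 ** (db / 10)


def fraction_delivered(loss_db: float) -> float:
    """Fraction of launched signal power that survives a given insertion loss."""
    return 1 / db_to_power_ratio(loss_db)


if __name__ == "__main__":
    pluggable_loss_db = 22.0  # electrical path to a pluggable transceiver
    cpo_loss_db = 4.0         # millimeter-scale path with co-packaged optics
    print(f"improvement: {db_to_power_ratio(pluggable_loss_db - cpo_loss_db):.1f}x")
    print(f"delivered: {fraction_delivered(pluggable_loss_db):.2%} vs "
          f"{fraction_delivered(cpo_loss_db):.1%}")
```

At 22 dB, well under 1% of the launched power reaches the far end; at 4 dB, roughly 40% does.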

7. Strategic Implementation Roadmap

A successful transition to 800G requires a phased approach that balances immediate performance needs with long-term infrastructure viability.

Fabric Assessment & Telemetry

Deploy advanced monitoring tools such as NVIDIA UFM (Unified Fabric Manager) or Dell SmartFabric Manager. Comprehensive telemetry is required to monitor fabric health and to catch congestion, which is inherent in high-density AI training, before it stalls jobs.
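Most fabric telemetry starts from cumulative byte counters sampled at intervals. The sketch below turns two samples into link utilization and flags hot links. The link names, counter values, and 80% threshold are hypothetical. It does not use the UFM or SmartFabric Manager APIs, which expose their own interfaces.

```python
from typing import Dict, List, Tuple


def link_utilization(bytes_t0: int, bytes_t1: int, interval_s: float,
                     link_gbps: float) -> float:
    """Average utilization (0..1) of a link between two byte-counter samples."""
    if bytes_t1 < bytes_t0:
        raise ValueError("counter went backwards (reset or wrap); discard this sample")
    bits_per_s = (bytes_t1 - bytes_t0) * 8 / interval_s
    return bits_per_s / (link_gbps * 1e9)


def hot_links(samples: Dict[str, Tuple[int, int]], interval_s: float,
              link_gbps: float, threshold: float = 0.8) -> List[str]:
    """Names of links whose utilization meets or exceeds the threshold."""
    return sorted(
        name for name, (t0, t1) in samples.items()
        if link_utilization(t0, t1, interval_s, link_gbps) >= threshold
    )


if __name__ == "__main__":
    # Hypothetical 10-second samples from three 800G uplinks.
    samples = {
        "leaf1-spine1": (0, 900_000_000_000),  # 90% utilized
        "leaf1-spine2": (0, 400_000_000_000),  # 40% utilized
        "leaf2-spine1": (0, 950_000_000_000),  # 95% utilized
    }
    print(hot_links(samples, interval_s=10, link_gbps=800))
```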

Converged Infrastructure

Architects should prioritize merging LAN and SAN traffic to reduce management complexity. Utilizing platforms like the Dell S4148U—which enables convergence of LAN and SAN traffic by supporting FC8, FC16, and FC32—reduces the "blast radius" of management errors and significantly lowers the RU footprint in high-density racks.

Future-Proofing through Multi-Rate Connectivity

Implement hardware that supports 100/200/400/800G multi-rate ports. This allows for a gradual migration, utilizing breakout cables to connect new 800G clusters to existing storage without a forklift upgrade.
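Breakout planning reduces to counting how many 800G ports a set of legacy links consumes. The sketch below makes two assumptions:

- An 800G port can split into 2x400G, 4x200G, or 8x100G.
- All lanes of one port run at the same speed.

The breakout modes a platform actually supports depend on the switch and optics. The helper name and example link counts are hypothetical.

```python
import math
from typing import Dict

# Assumed breakout fan-out: legacy links per 800G port at each speed (Gb/s).
BREAKOUT_FANOUT = {400: 2, 200: 4, 100: 8}


def ports_for_legacy_links(links_by_speed: Dict[int, int]) -> int:
    """800G ports needed to attach legacy links via breakout cables.

    Speeds are not mixed within a single port, so each speed is packed separately.
    """
    total = 0
    for speed_gbps, count in links_by_speed.items():
        if speed_gbps not in BREAKOUT_FANOUT:
            raise ValueError(f"no breakout mode assumed for {speed_gbps}G")
        total += math.ceil(count / BREAKOUT_FANOUT[speed_gbps])
    return total


if __name__ == "__main__":
    # Hypothetical existing storage: ten 400G and twenty 100G links.
    print(ports_for_legacy_links({400: 10, 100: 20}))
```

In this hypothetical case, 30 legacy links consume only 8 ports on the new switch, leaving the rest for 800G compute.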

Platforms Referenced in This Blueprint

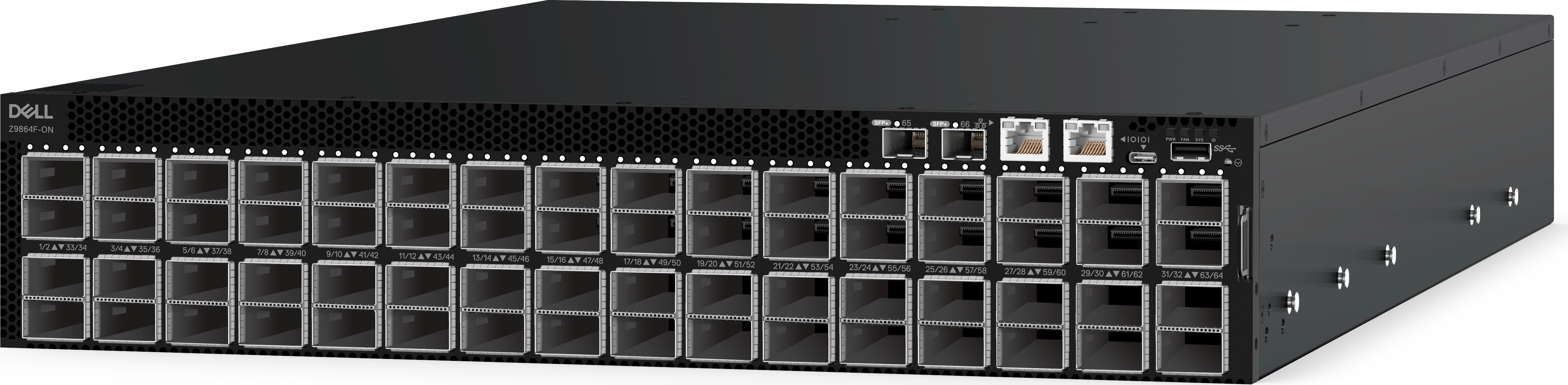

PowerSwitch Z9864F-ON

64x 800GbE • 51.2 Tbps • 2RU • Enterprise SONiC

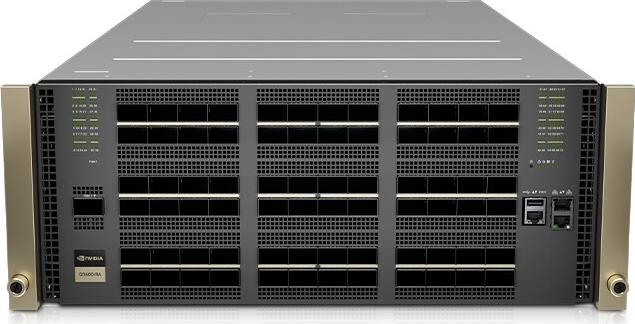

Quantum-X800 Q3401-RD

144x 800Gb/s • 115.2 Tbps • 4th Gen SHARP

Quantum-X800 Q3400-RA

144x 800Gb/s • 115.2 Tbps • Air-Cooled

Quantum-X800 Q3200-RA

72x 800Gb/s • 57.6 Tbps • Strategic Bridge

Quantum-2 QM9790

64x 400Gb/s • 25.6 Tbps • SHARPv3

Quantum-2 QM9701

64x 400Gb/s • 25.6 Tbps • SHARPv3

Quantum-2 QM9700

64x 400Gb/s • 25.6 Tbps • NDR

PowerSwitch S4112T-ON

12x 10GBase-T • 840 Gbps • Management

PowerSwitch S4148U

LAN/SAN Convergence • FC8/16/32 • Legacy

Ready to architect your 800G AI fabric?

Our networking specialists can help you evaluate platforms and design a phased migration plan.
